UltraRAG Development Practice

Branch-based RAG Workflow

This section will guide you to implement a RAG reasoning workflow with branching decision capability: Search-o1.

prompt/search_o1_reasoning.jinja

Corresponding tool function:

searcho1_reasoning_indocument: Reason after reading documents

This tool injects search results into the model input to help the model analyze retrieved results and continue reasoning.

Create the template

prompt/search_o1_reasoning.jinja

Corresponding tool function:

searcho1_reasoning_indocument: Reason after reading documents

This tool injects search results into the model input to help the model analyze retrieved results and continue reasoning.

Create the template  prompt/search_o1_refinement.jinja

The corresponding tool function implementation:

To allow this tool to use an independent template parameter

prompt/search_o1_refinement.jinja

The corresponding tool function implementation:

To allow this tool to use an independent template parameter  servers/prompt/parameter.yaml

This avoids configuration overwriting after build when multiple prompt tools share the same template parameter.

search_o1_insert: Insert documents as search results into the reasoning context

This tool does not depend on a template; it directly appends the search results as

Whether to continue retrieval is decided by whether the end tokens

Extracts the query string inside the

servers/prompt/parameter.yaml

This avoids configuration overwriting after build when multiple prompt tools share the same template parameter.

search_o1_insert: Insert documents as search results into the reasoning context

This tool does not depend on a template; it directly appends the search results as

Whether to continue retrieval is decided by whether the end tokens

Extracts the query string inside the  examples/search_o1.yaml

Open the generated

examples/search_o1.yaml

Open the generated  examples/parameter/search_o1.yaml

examples/parameter/search_o1.yaml

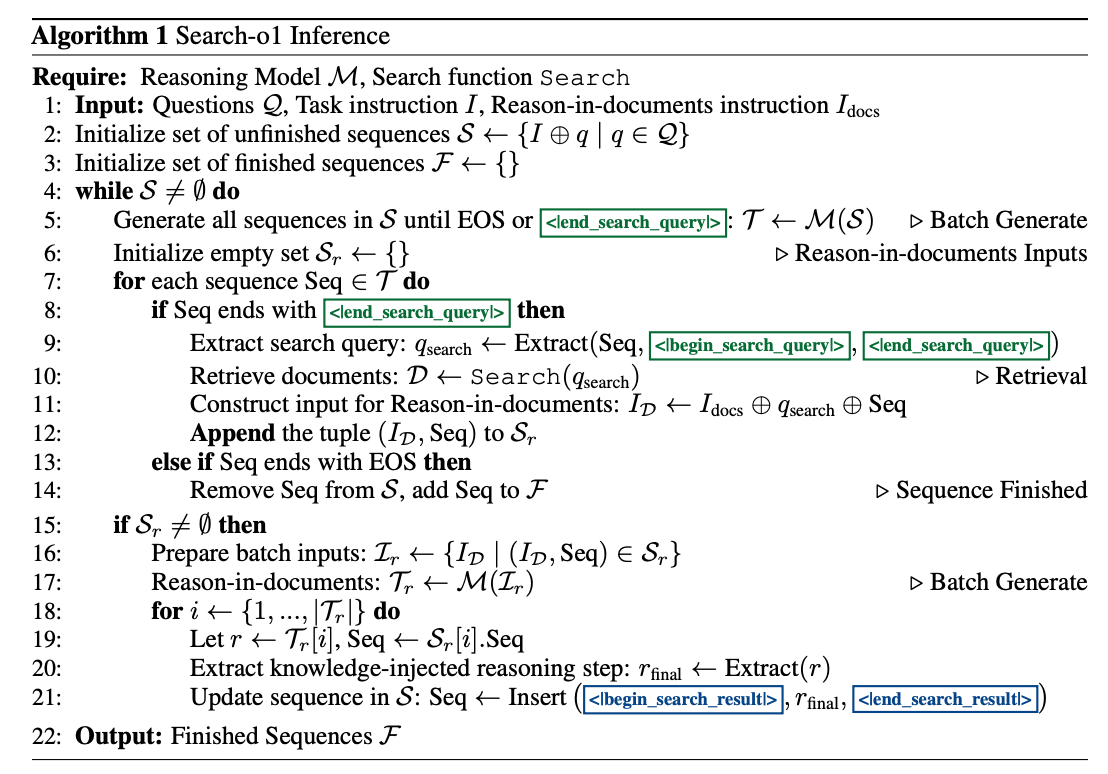

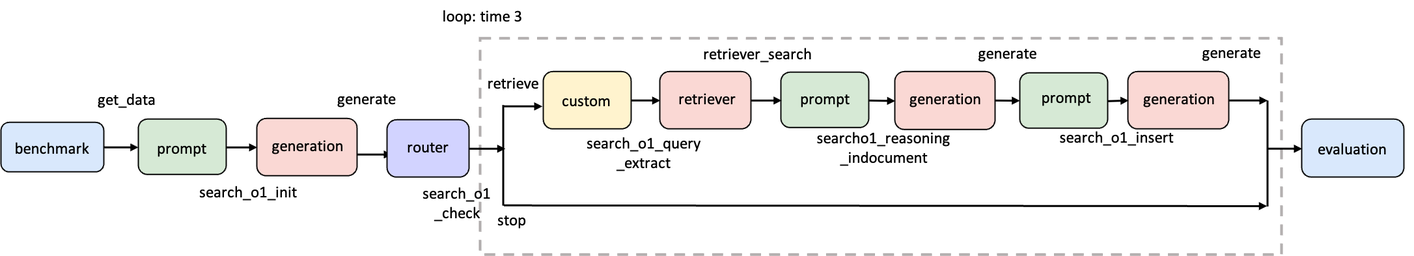

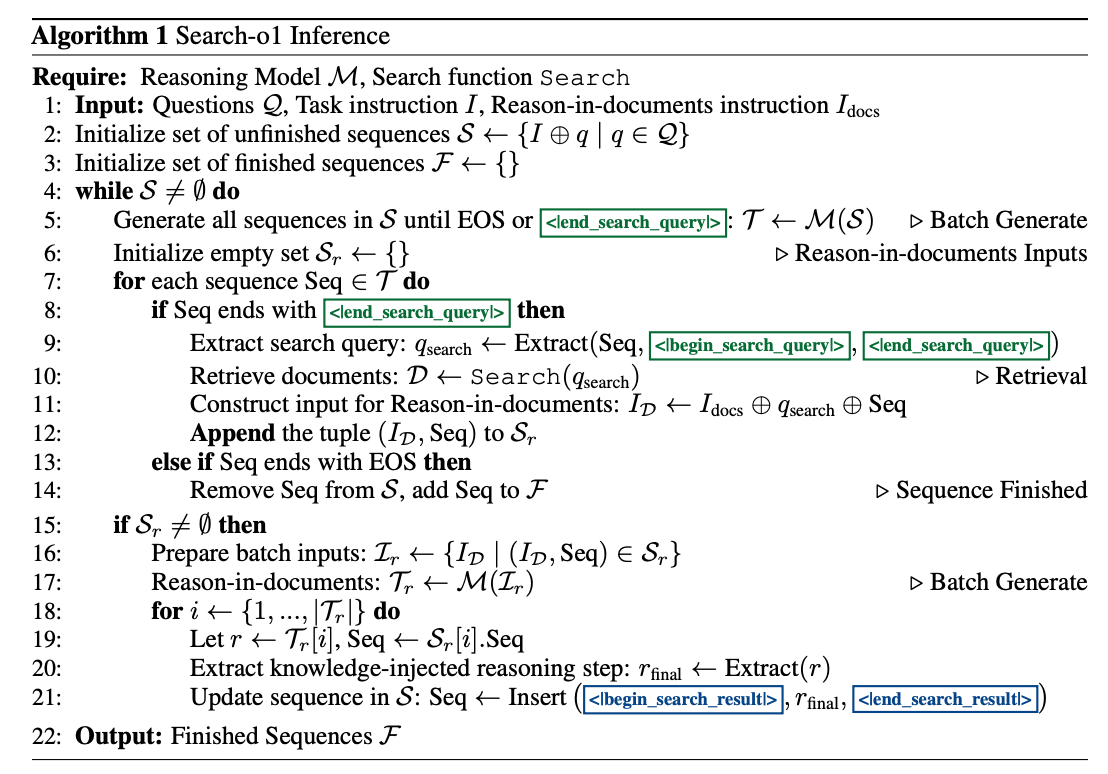

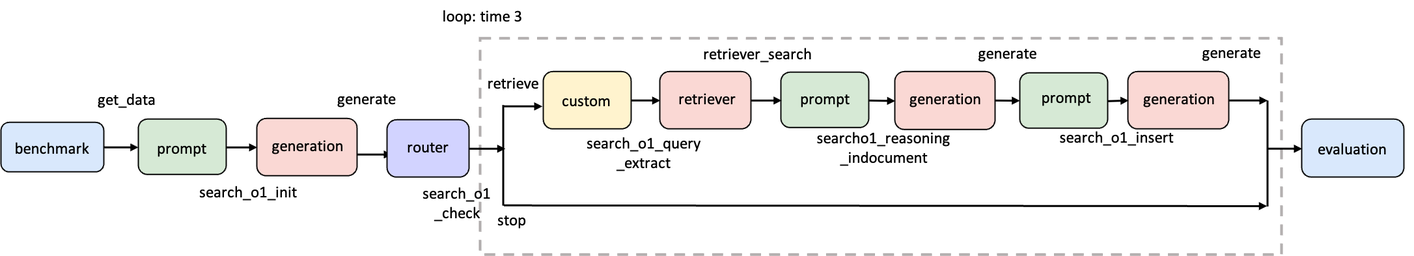

Paper: https://arxiv.org/pdf/2501.05366The core idea of Search-o1 is to let the LLM autonomously decide when it lacks knowledge during reasoning, proactively generate search queries to call an external retrieval module for supplementary documents, and then—via a Reason-in-Documents module—analyze and refine long retrieval results, extract useful information, and inject it back into subsequent reasoning to reduce noise.

Step 1: Define Workflow Structure

First, let’s review the Search-o1 algorithm flowchart:

Step 2: Implement Necessary Tools

Step 2.1: Implement Prompt Server

In the Search-o1 workflow, the Prompt Server mainly undertakes two tasks:- Build the initial prompt (with search capability instructions)

- Orchestrate the alternating process of search → reasoning, including inserting search results and performing document analysis

prompt/search_o1_reasoning.jinja:

prompt/search_o1_reasoning.jinja

prompt/search_o1_reasoning.jinjaservers/prompt/src/prompt.py

prompt/search_o1_refinement.jinja:

prompt/search_o1_refinement.jinja

prompt/search_o1_refinement.jinjaservers/prompt/src/prompt.py

reasoning_indoc_template, remember to explicitly register it in servers/prompt/parameter.yaml:

servers/prompt/parameter.yaml

servers/prompt/parameter.yaml<|begin_search_result|>…<|end_search_result|> to the existing prompt:

servers/prompt/src/prompt.py

Step 2.2: Implement Router Server

Add inservers/router/src/router.py:

servers/router/src/router.py

<|im_end|> or <|end_search_query|> are present.

Step 2.3: Implement Custom Server

Add inservers/custom/src/custom.py:

servers/custom/src/custom.py

<search> tag from the generated text.

Step 3: Write the Pipeline Configuration

Following the above, createexamples/search_o1.yaml:

examples/search_o1.yaml

examples/search_o1.yamlStep 4: Configure Pipeline Parameters

Run:examples/parameter/search_o1.yaml and modify benchmark/retriever/generation as needed (or set defaults in each Server’s parameter.yaml before build):

examples/parameter/search_o1.yaml

examples/parameter/search_o1.yaml